When data feels certain, but decisions are not

By Michael Flock, Flock Advisors

The most dangerous moment in a deal is often the moment you win it. The model works. The numbers support it. The assumptions feel justified. And only later does reality appear.

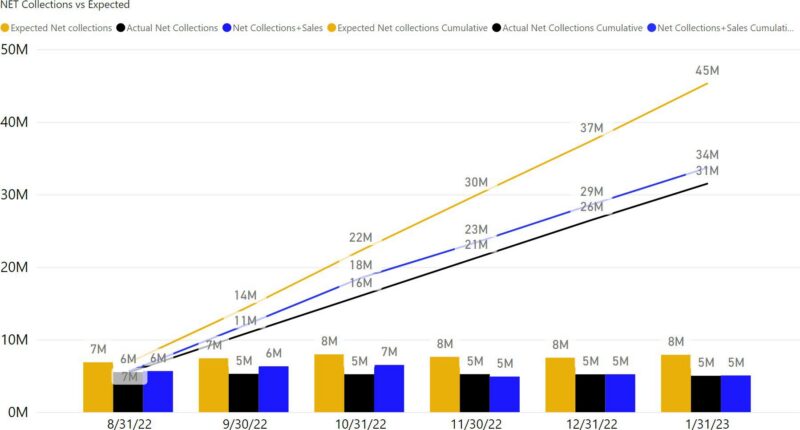

The portfolio bids are due at the end of the day. Beautiful spreadsheets and glittering graphs fill the screens. Robust models test assumptions. Curves are carefully constructed. The data is comprehensive. The analysis is rigorous. One last adjustment is made. A collections assumption moves slightly higher. Confidence in the seller helps justify it. The model still works. The bid goes in. And the firm wins the portfolio. The team high-fives.

In a data-driven business, that moment feels like validation. The model was right. The assumptions held. The numbers justified the decision. Months later, performance begins to diverge. Collections lag. The curves flatten. Something not captured in the data begins to surface. A year later, the investment is written down.

The model wasn’t necessarily wrong. But the decision was. Why? “We used AI!”

When data looks right, but the decision is wrong

Models reflect history. Outcomes depend on what changes.

In theory, underwriting a portfolio, or evaluating any investment, is empirical. Data is collected. Models are built. Outcomes are projected, increasingly with AI. In practice, the final decision is rarely purely empirical. Assumptions shift. Confidence varies. Views about the seller, the asset class, or execution shape how the data is interpreted. The model may be objective. The decision rarely is.

Bias does not only affect interpretation. It affects inquiry. When a team wants a deal to work, certain questions are asked with less urgency. Others are never asked. Diligence becomes confirmatory rather than skeptical. The absence of disconfirming evidence is mistaken for validation. The analysis can be thorough. But the judgment is not.

Even sophisticated models carry a quieter risk. They rely on many variables. But those variables are not uniformly available or equally reliable. The output appears precise. The inputs are not always comparable.

Credit scoring offers a familiar example. A single number represents risk, yet some consumers have deep histories while others have limited data. The score appears consistent. The underlying information is not.

Debt models face the same constraint. The model may be precise. The inputs are not always complete. Even when interpreted correctly, data is incomplete. The most consequential variables are often the ones not captured: changes in strategy, shifts in behavior, dynamics that never enter the dataset. The greatest risk is not always in the assumptions we make. It is in the assumptions we don’t realize we are making.

The same data can support different conclusions. Analysts working from similar information often arrive at different forecasts, especially when conditions are changing. Historical relationships do not always hold, and context shifts. The data is shared. The interpretation is not.

But precision has a way of encouraging confidence. In data-rich environments, there is a temptation to trust the beauty of the model, the clarity of the charts, the coherence of the projections. Analysis can drift into conviction. The more precise the presentation, the less it seems to invite doubt.

The perils of precision are not theoretical. In the years leading up to the 2008 financial crisis, some of the most sophisticated institutions relied on models that appeared rigorous and precise. The output was elegant. The risk seemed manageable. The data was real. The conclusions were confident. And the outcome was profoundly wrong. Better data does not eliminate uncertainty. It can mask where uncertainty resides.

The model can be precise. The output compelling. But the final step (interpretation, judgment, the questions asked or not asked) remains human. That is another example of the seduction of AI, and its interaction with the Human Algorithm.

The model does not eliminate judgment. The problem is not that we experience wins and losses. It is that we decide too quickly what they mean. In investing, in leadership, and in life, the score is rarely settled in the moment.